What AI Changed About Software IP

AI did not erase software IP. It forced leaders to separate trade secrets and confidentiality duties from code that n...

13 min read

04.05.2026, By Stephan Schwab

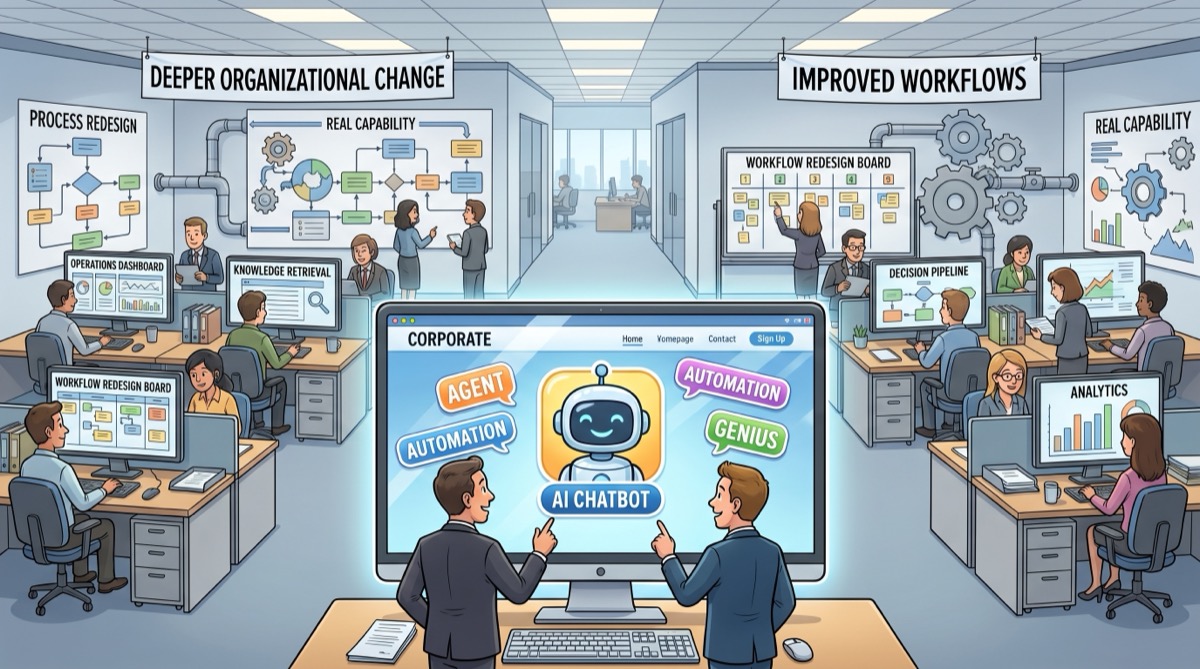

Putting a chatbot on your website does not mean your company is using AI in any meaningful sense. It means you put a chatbot on your website. The same goes for workflow automation with a little language model dust sprinkled on top. Real AI leverage changes how decisions get made, how work gets done, and what the organization can do that it could not do before. That starts with naming what AI actually is, because the two letters now get attached to almost anything that blinks.

Most public discussion treats AI as if it were one thing. It isn’t. The label now covers a messy range of technologies that do very different jobs.

Sometimes AI means a statistical model that predicts churn, fraud, demand, or maintenance risk. Sometimes it means computer vision classifying images. Sometimes it means recommendation systems ranking likely choices. Sometimes it means large language models that generate text, summarize documents, answer questions, write code, or coordinate work across tools.

Those systems have one thing in common: they infer patterns from data and produce outputs that are not just a hard-coded chain of if-then rules. That does not make them magical. It means they can handle classes of problems where the world is too messy for rigid hand-written logic alone.
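A toy sketch in Python makes the difference visible. Everything here is a hypothetical illustration: the messages, labels, and function names are invented, and a word-count scorer is a deliberately crude stand-in for a real statistical model. The first function is hand-written logic. The behavior of the second comes from labeled examples.

```python
from collections import Counter, defaultdict

def is_complaint_by_rule(message: str) -> bool:
    # Hand-written logic: only the phrasing the author anticipated is caught.
    return "refund" in message.lower()

def train(examples: list[tuple[str, str]]) -> dict[str, Counter]:
    # Inferred logic: count which words appear under each label in the data.
    counts: dict[str, Counter] = defaultdict(Counter)
    for text, label in examples:
        counts[label].update(text.lower().split())
    return counts

def classify(counts: dict[str, Counter], message: str) -> str:
    # Score each label by how often its training examples used these words.
    words = message.lower().split()
    scores = {label: sum(c[w] for w in words) for label, c in counts.items()}
    return max(scores, key=scores.get)

# Hypothetical labeled examples; a real model would need far more data.
examples = [
    ("my order arrived broken and I want my money back", "complaint"),
    ("the package never showed up this is unacceptable", "complaint"),
    ("thanks the delivery was fast", "praise"),
    ("great service and friendly support", "praise"),
]
model = train(examples)

message = "package arrived broken"
print(is_complaint_by_rule(message))  # False: no "refund" keyword
print(classify(model, message))       # complaint
```

The rule misses the complaint because nobody wrote "broken" into it. The scorer catches it because similar words appeared in the data. The flip side is just as visible: the scorer is only as good as its examples, which is the "not magical" part.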

This is where public language falls apart. People say “AI” when they mean automation. They say “AI” when they mean machine learning. They say “AI” when they mean a chatbot. They say “AI” when they mean a vendor add-on that writes polite email drafts. By that point the term has so many meanings that it stops saying much.

For leadership, the practical definition is simpler: AI is useful when a system helps people or processes deal with ambiguity, pattern recognition, messy language, large knowledge sets, or probabilistic decisions in a way that changes actual outcomes. If all you did was add a conversational wrapper around a FAQ, you did not suddenly become an AI-powered company.

This is the first distinction leadership needs to make. A chatbot is a surface. AI adoption is an operational capability.

A chatbot can be useful. It can reduce simple support load, route inquiries, collect leads, or answer repetitive questions. Fine. None of that proves your company has meaningfully changed how it learns, decides, delivers, or serves customers.

A help widget that answers shipping questions is not the same thing as using AI to improve pricing decisions, accelerate proposal work, detect failure patterns, support internal knowledge retrieval, improve customer onboarding, or help teams make better decisions with less delay.

One is a feature. The other is a capability.

Too many companies buy the feature and announce the capability.

This confusion is older than the current AI wave. For years, companies automated form routing, invoice handling, notifications, CRM updates, ticket assignment, and approval chains. Useful work. Occasionally very useful work.

But automation and AI are not interchangeable ideas.

Classic automation is about predefined steps: if this happens, do that. Route this request. Copy that field. Trigger this email. Move that record. It reduces manual effort in repeatable processes.
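A minimal Python sketch of that kind of automation, with hypothetical ticket categories and queue names. Every outcome is written down in advance, which makes it predictable and cheap, and also means it cannot interpret a request that does not fit the predefined fields.

```python
from dataclasses import dataclass

@dataclass
class Ticket:
    subject: str
    category: str  # chosen by the customer in a form, not inferred

# Predefined steps: if this category arrives, send it to that queue.
ROUTES = {
    "billing": "finance-queue",
    "shipping": "logistics-queue",
}

def route(ticket: Ticket) -> str:
    # Anything the rules did not anticipate falls through to a default queue.
    # The subject line is never read, so its meaning plays no part.
    return ROUTES.get(ticket.category, "general-queue")

print(route(Ticket("Invoice total is wrong", "billing")))  # finance-queue
print(route(Ticket("Where is my parcel?", "other")))       # general-queue
```

The second ticket is obviously about shipping, but the routing table cannot know that. Working out the intent from a messy subject line is the kind of job where AI may add something; moving the record once the category is known is plain automation.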

AI becomes interesting when the work involves ambiguity, pattern recognition, summarization, retrieval, prioritization, generation, probabilistic judgment, or interaction with messy human language and messy human context.

Even then, not every AI use is valuable. A badly placed language model can be nothing more than an expensive random text generator glued onto a process that used to be stable.

So no, buying automation tooling does not automatically mean your company is leveraging what AI can actually offer. It may just mean you modernized your plumbing.

A lot of the current AI language is sales language, not operating language.

Suddenly every workflow tool has agents. Every chatbot is intelligent. Every assistant is autonomous. Every rules engine has been reborn with a dramatic new name and a larger invoice.

This is normal vendor behavior. The problem starts when leadership mistakes category inflation for actual capability.

If a vendor says they provide “AI agents,” ask what the thing actually does.

The answer is not a semantic detail. It is the difference between buying a label and buying a capability.

The same goes for internal politics. Slapping “AI” onto a roadmap item is an easy way to look current without changing much of anything. A chatbot launch photographs well. Process redesign does not. One gets applause. The other creates durable advantage.

The word “agent” is especially useful for this kind of inflation because it smuggles in a story. It makes the software sound like a responsible actor with goals, initiative, and judgment. That framing is commercially convenient. It also quietly raises expectations far beyond what most of these systems can actually do.

If leadership wants to know whether the company is actually using AI well, stop looking at the demo and look at the operating model.

Real leverage usually shows up in places like these:

Notice what these examples have in common. They are not mostly about a public-facing gimmick. They are about changing how the organization works.

That is the useful question: where did AI increase the company’s range?

Did it help your people make better decisions? Did it reduce delay? Did it lower the cost of dealing with complexity? Did it improve quality under real operating conditions? Did it enable something that used to be impractical?

If the answer is no, then you may have purchased AI-flavored decoration.

Leadership teams are under pressure to show movement. Boards ask about AI. Investors ask about AI. Customers ask about AI. Suddenly every executive deck needs a slide proving the company is not asleep.

A chatbot is convenient because it is easy to point at.

“There. We did AI.”

That sentence flatters exactly the wrong instinct in the organization. It rewards visibility over substance. It confuses customer-facing novelty with internal capability. It turns a strategic question into a branding exercise.

Meanwhile, the harder work sits in the background: fixing knowledge fragmentation, redesigning workflows, exposing useful data, establishing feedback loops, tightening governance, and figuring out where probabilistic systems help and where they create fresh risk.

That work is less glamorous. It is also where the value lives.

If you want to cut through the theater, ask a short set of brutal questions:

Those questions force the conversation back to outcomes.

A serious AI initiative should change how work moves through the company. It should produce evidence. It should have boundaries, ownership, and visible tradeoffs. It should not survive on applause alone.

That is the useful definition. Not a digital colleague. Not a machine employee. Not a synthetic executive. Just software with a bit more initiative than a one-shot command.

In practice, most so-called agents do some mix of these things:

That can be useful. It is also much narrower than the public story built around the word.

The important technical detail, explained in normal language, is that the large language model itself is usually stateless. It does not wake up in the morning remembering your company, your customer, or the last meeting. Each time it is called, it receives a package of context and produces a response. Then that moment is over.

What makes the system look continuous is the ordinary software wrapped around the model.

That surrounding software is what makes the “agent” appear to remember, plan, and act over time. The model provides the language and pattern inference. The rest is normal software: storage, retrieval, orchestration, permissions, logging, and user interface.

This matters because it cuts through the magic trick. When someone says, “the agent knows our business,” what they usually mean is that a conventional software stack retrieved the relevant information and handed it to a stateless model at the right moment.
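A minimal Python sketch of that split, under loudly stated assumptions: `call_model` is a hypothetical stand-in for any language model API, the keyword lookup is a toy substitute for real retrieval, and the class and method names are invented for illustration. The point is where things live. The model function keeps nothing between calls, while storage, retrieval, prompt assembly, and conversation history all sit in ordinary code around it.

```python
import re
from dataclasses import dataclass, field

def call_model(prompt: str) -> str:
    # Hypothetical stand-in for a language model call. Like the real thing,
    # it only sees the prompt it is handed and keeps no state between calls.
    return f"[answer generated from {len(prompt)} characters of context]"

@dataclass
class Assistant:
    knowledge: dict[str, str]  # ordinary storage, e.g. rows in a database
    history: list[str] = field(default_factory=list)  # "memory" lives here

    def retrieve(self, question: str) -> list[str]:
        # Toy retrieval: return documents whose key word appears in the question.
        words = set(re.findall(r"[a-z]+", question.lower()))
        return [text for key, text in self.knowledge.items() if key in words]

    def ask(self, question: str) -> str:
        # Prompt assembly: the wrapper decides what the model gets to see.
        prompt = "\n".join(
            ["Context:", *self.retrieve(question),
             "Conversation so far:", *self.history,
             "Question: " + question]
        )
        answer = call_model(prompt)  # one stateless call, then it is over
        self.history += [question, answer]  # continuity is stored by the wrapper
        return answer

assistant = Assistant(knowledge={"refunds": "(hypothetical) refund policy text"})
assistant.ask("How do refunds work?")
assistant.ask("And for damaged items?")
print(len(assistant.history))  # 4: two questions and two answers, kept outside the model
```

Clear `history` or point `knowledge` at a stale table and the "agent" suddenly forgets or misleads, without anything changing in the model. That is the practical meaning behind "the agent knows our business."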

The reason vendors love “agent” is simple: it sounds like a unit of labor. It suggests initiative, delegation, and relief. It whispers that you might buy software instead of fixing process, training people, or hiring capability. That is why the label spreads faster than the reality.

If you want a broader leadership lens for this kind of confusion, read why non-technical leaders keep underestimating software complexity. The same mistake shows up here: people confuse a visible surface with the deeper system underneath.

This is not stupidity. It is normal human perception.

People anthropomorphize. We attribute agency, intention, confidence, and competence to anything that behaves like a social actor. A system that answers in full sentences, remembers part of a conversation, and appears to act on its own triggers exactly that response. The old ELIZA effect never went away. The models just got better at provoking it.

And because the memory-looking part is stitched together by surrounding software, the illusion becomes stronger. The user sees continuity. They do not see the database fetch, the prompt assembly, the retrieval step, the policy checks, or the workflow engine behind the curtain. They see one fluent surface and assume one coherent mind.

That matters because perception changes decision-making. Once leadership starts seeing a tool as a quasi-person, they ask the wrong questions. They stop asking what the system is constrained to do and start asking what it “knows.” They stop asking where it fails and start asking how many people it can replace. They stop evaluating a tool and start imagining a worker.

That is also why agentic coding gets marketed so aggressively. A demo that shows software reading files, proposing a plan, writing code, and opening a pull request creates a strong psychological impression of autonomy. It looks like a developer who never gets tired and never sends an invoice.

But perception is doing a lot of the work there. What you are often seeing is a probabilistic system operating inside a tightly framed environment with tools, prompts, retries, and guardrails prepared in advance. Useful? Often. Independent in the human sense? No.

Agentic coding usually means giving a model a coding objective plus access to code, tests, terminals, tools, and enough looped control to inspect, change, run, and revise without asking for permission on every step.

Here too, the apparent autonomy comes from the wrapper as much as the model. The coding tool stores the task, tracks files, chooses what context to send, decides which tools are available, captures command output, keeps the loop going, and presents the result as if one entity carried the whole thread. The language model is one component in that system, not the whole system.
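The loop itself can be sketched in a few lines of Python. This is a simplified illustration, not how any particular coding tool works: `propose_change` stands in for a model-backed step and `run_tests` for whatever test runner the wrapper invokes. Both are hypothetical parameters, and the fakes in the usage are invented so the sketch runs on its own.

```python
from typing import Callable

def agent_loop(
    propose_change: Callable[[str, str], str],     # hypothetical model-backed step
    run_tests: Callable[[str], tuple[bool, str]],  # wraps a test runner: (passed, output)
    code: str,
    max_steps: int = 5,
) -> tuple[str, bool, int]:
    """Change, run, read the output, revise, until tests pass or the budget runs out."""
    feedback = "no feedback yet"
    for step in range(1, max_steps + 1):
        code = propose_change(code, feedback)  # the model proposes a revision
        passed, feedback = run_tests(code)     # the wrapper captures command output
        if passed:
            return code, True, step
    return code, False, max_steps

# Invented fakes so the sketch runs without a model or a real test suite.
def fake_propose(code: str, feedback: str) -> str:
    return code + "+fix"

def fake_tests(code: str) -> tuple[bool, str]:
    ok = code.count("+fix") >= 2
    return ok, "all tests pass" if ok else "1 test failing"

print(agent_loop(fake_propose, fake_tests, "draft"))
# ('draft+fix+fix', True, 2)

# With a check that never fails, the loop "succeeds" on the first change.
print(agent_loop(fake_propose, lambda code: (True, "no tests"), "draft"))
# ('draft+fix', True, 1)
```

The second call shows why weak tests matter: the loop trusts whatever `run_tests` reports, so a check that never fails lets it declare victory after one unverified change. The model is one parameter; the guardrails are everything else.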

That distinction matters for buyers and leaders because it tells you where the real work sits. If the surrounding software is weak, the “agent” will be weak. If the surrounding process is broken, the “agent” will inherit the breakage. There is no magic layer floating above the rest of the stack.

That is a meaningful change in tooling. It can save time. It can reduce friction. It can grind through repetitive work and expose options quickly.

It is not the same thing as replacing software development with autonomous magic.

The coding agent still inherits the quality of the environment around it. Weak tests, vague goals, fragmented architecture, bad data, sloppy review, and absent design discipline do not disappear. The agent simply moves through that mess faster. Sometimes much faster.

This is why the agentic coding pitch is often psychologically brilliant and operationally dishonest. It sells the image of a self-directed builder while quietly depending on human-created constraints, human-owned context, and human judgment at every layer that actually matters.

An agent is not judgment. It is not accountability. It is not organizational memory. It is not strategy. It is not a substitute for clean data, coherent processes, or competent leadership.

If your process is broken, an agent may help you break it faster. If your data is fragmented, the agent will inherit the fragmentation. If your teams do not trust the outputs, the shiny demo will die in production reality like every other unloved tool.

That is the uncomfortable test.

Take away the chatbot. Take away the AI branding. Take away the vendor deck and the launch announcement. What actually changed?

Did the company learn faster? Did it reduce coordination drag? Did teams make better calls with less waiting? Did customers get a genuinely better experience? Did the business unlock work that used to be too slow, too expensive, or too messy?

If yes, good. You are probably using AI for something real.

If no, then the company may not be using AI in any strategically meaningful way yet. It may simply be renting the costume.

That is not a moral failure. It is just better to name it honestly. Once you stop pretending the chatbot is the strategy, you can start looking for the places where AI actually matters.

And once you stop treating vendors’ language as strategy, you can ask the only question that matters: what changed in the business that would still matter after the demo glow wears off?
