Cypress Beyond Testing: Executable Demos for Your Pipeline

What if every product demo you gave also served as a quality gate in your CI/CD pipeline? Cypress, traditionally posi...

10 min read

20.04.2026, By Stephan Schwab

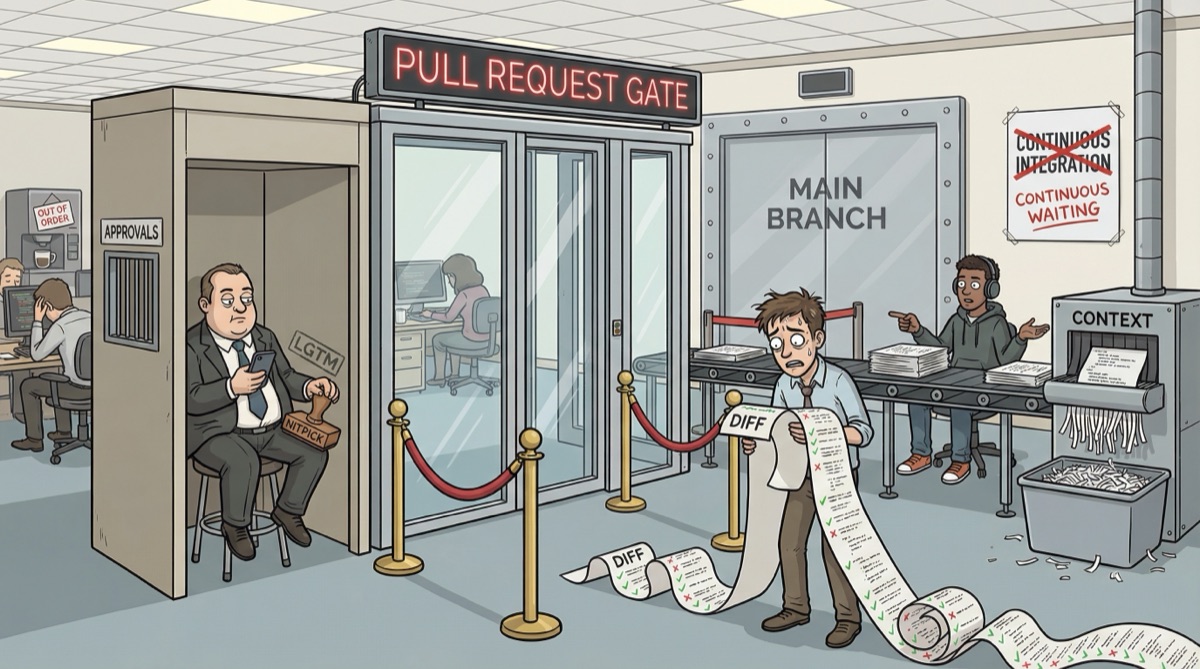

Pull requests were invented to let strangers contribute to open source projects maintained by people who had no reason to trust them. Somewhere along the way, companies adopted the same gatekeeping mechanism for teams that sit ten meters apart and share a coffee machine. The result is approval theater, rubber-stamp reviews, and a workflow that directly contradicts continuous integration. Your cohesive team doesn't need a gate. It needs a conversation.

Before GitHub existed, open source projects accepted contributions through mailing lists. You wrote a patch, formatted it according to the project’s conventions, sent it to the list, and waited. Maintainers you’d never met read your code, argued about it in public, and eventually accepted or rejected it. The Linux kernel still works this way. Linus Torvalds reviews patches from thousands of developers he’s never shared an office with. The process exists because trust doesn’t.

GitHub launched pull requests in 2008 to formalize this workflow. The pull request is a proposal: “I wrote something. Please review it before merging it into your project.” The key word is “your.” The contributor doesn’t own the codebase. The maintainer does. The pull request is a boundary between inside and outside.

This makes perfect sense for open source. When a stranger submits code to a project they don’t maintain, someone who understands the architecture should review it. The PR is a gate, and gates are useful when you don’t know who’s knocking.

Then companies looked at GitHub and thought: “Our developers should do this too.”

And that’s where things went wrong.

A cohesive team shares context. They know the codebase. They know the domain. They sat through the same meetings, argued about the same architectural decisions, and fixed the same production incidents at 02:00 on a Saturday.

Pull requests treat every contribution as if it comes from outside. Every change needs permission before it reaches the main branch. Every developer must wait for someone else to click a green button before their work counts.

On a team of five developers who pair regularly and talk about the code daily, this is overhead dressed up as quality. You hired these people. You trained them. You gave them access to production. But you don’t trust them to push to main without a permission slip?

The counterargument is always the same: “What if someone makes a mistake?” Good question. But the PR isn’t catching the mistake. A Microsoft Research study of code review at Microsoft found that although developers name finding defects as their main motivation for reviewing, reviews surface fewer defects than expected. Most review comments concern code improvements such as readability and conventions, and the main outcomes are knowledge transfer and team awareness. The bugs that matter (subtle logic errors, race conditions, misunderstood business rules) sail through reviews because reviewers often spend only a few minutes on a diff they didn’t write and don’t fully understand.

Here’s what pull request reviews look like on most teams. The PR opens. Somewhere between three and six minutes later, someone clicks approve. The comment says “LGTM” or contains a thumbs-up emoji. No questions asked. No alternative approaches suggested. No evidence that the reviewer read anything beyond the PR title.

I’ve seen two tracks in practice. The fast track: approval in under five minutes for diffs spanning hundreds of lines. Nobody reads two hundred lines of code in five minutes. The reviewer glances at the title, maybe skims the file list, clicks approve. No checkout, no local testing, no understanding. It’s a fire emoji and a green checkmark. The slow track: someone actually checks the branch out, runs it locally, tries to understand what changed and why. That takes a day. A full working day lost to reviewing someone else’s batch of changes. Neither track works. The fast one catches nothing. The slow one destroys throughput. Both pretend to be quality control.

The dangerous part isn’t the lack of attention. The dangerous part is the confidence it creates. The team believes it has a review process. The PR history shows approvals, comments, green checkmarks. Managers point to it in audits. “We have mandatory code review.” That sentence creates the illusion that someone is catching problems. Nobody is catching problems. The process catches nothing. But it looks like it does, and that’s worse than having no process at all, because at least with no process you know you’re exposed.

When a production incident happens, the post-mortem looks at the commit, finds the PR, sees the approval, and concludes: “The review process worked. This was an edge case nobody could have caught.” Nobody asks the obvious question: did the reviewer actually read the code? The answer is almost always no. But the green checkmark says yes, and the green checkmark is what goes in the report.

We use AI code generation every day. It’s genuinely powerful. Write a failing test, let the AI propose an implementation, evaluate the result, adjust, commit. TDD with an AI pairing partner on trunk-based development creates tight feedback loops that produce solid code fast. The AI suggests, you question, you refine together. The discipline hasn’t changed. The principles are decades old. The tool is new.

The problem isn’t AI. The problem is what happens when AI-generated code meets pull request workflows.

Now that everyone proclaims “coding is a solved problem,” the volume of code flowing through pull requests is exploding. Developers who used to write fifty lines a day generate three hundred. The diffs are longer. The code looks plausible. It passes linting. It might even have tests, also generated, also unread.

Nobody was reading the code before. Now there is six times as much of it.

AI-generated code looks confident. Syntactically clean. Reasonable variable names. Comments included. It has the shape of code written by someone who knows what they’re doing. When you work with it in dialogue, test-first, you catch the gaps immediately: the edge cases it missed, the business rules buried in a Slack conversation from eight months ago, the reason that particular null check exists. The tight feedback loop of red-green-refactor doesn’t let hallucinations survive.

But drop that same code into a pull request? The reviewer clicking approve in four minutes doesn’t catch any of that. Before AI, at least the developer who wrote the code understood it. You could walk over and ask. The PR workflow now has code that was generated without full context, submitted without conversation, and approved without understanding. The green checkmark covers all three gaps equally.

AI as a pairing partner works. AI as a code cannon firing into a PR queue doesn’t. The difference isn’t the AI. It’s whether anyone is in dialogue with the code before it ships.

Continuous integration means every developer integrates their work into the main codebase multiple times per day. Not once a day. Not at the end of a feature. Multiple times per day. Small changes, frequently integrated, immediately tested.

Pull requests work against every element of that definition.

A pull request creates a branch. The branch diverges from main. The longer the branch lives, the further it drifts. Every hour that branch exists without being merged is an hour of integration risk accumulating. Other developers are changing the same codebase. Merge conflicts grow. Assumptions rot.

Then the PR sits in a queue. Waiting for a reviewer. The reviewer is busy. They have their own PRs to submit and their own work to do. The average PR at many organizations waits hours. Sometimes days. Some teams have PR queues measured in weeks.

During that wait, the developer who submitted the PR starts new work. Now they have two mental contexts: the work they’re doing and the work that’s waiting. When the review comes back with comments, they context-switch. They’ve moved on. The code is yesterday’s problem with today’s interruption.

Trunk-based development eliminates all of this. Everyone works on main. Commits are small. Integration happens continuously. There is no branch. There is no queue. There is no gate. The feedback loop is tight: write, test, commit, push. If something breaks, you know immediately because the test suite runs on every push.

“But what about unfinished features?” Feature flags. You deploy code that’s off by default and enable it when it’s ready. The code is integrated, tested, and in production. It’s just not visible to users yet. No branch. No PR. No queue.
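A minimal sketch of the feature-flag idea in Python, assuming a simple in-memory flag store. `FeatureFlags`, `checkout_total`, the `new_bulk_discount` flag and its 10% rule are all hypothetical names chosen for illustration; real teams usually read flags from configuration or a dedicated flag service. The point is that the unfinished code path is already on main and deployed, but stays dormant until someone flips the flag.

```python
from dataclasses import dataclass, field


@dataclass
class FeatureFlags:
    """Hypothetical in-memory flag store: every flag is off unless explicitly enabled."""
    _enabled: set = field(default_factory=set)

    def enable(self, name: str) -> None:
        self._enabled.add(name)

    def disable(self, name: str) -> None:
        self._enabled.discard(name)

    def is_enabled(self, name: str) -> bool:
        return name in self._enabled


def checkout_total(items: list, flags: FeatureFlags) -> float:
    """Hypothetical checkout logic with an unfinished feature behind a flag."""
    subtotal = sum(items)
    # Unfinished feature: integrated on main and deployed, but dormant
    # until the "new_bulk_discount" flag is turned on.
    if flags.is_enabled("new_bulk_discount") and len(items) >= 10:
        return round(subtotal * 0.9, 2)
    # Default path: existing behavior, unchanged for users.
    return subtotal


flags = FeatureFlags()
print(checkout_total([10.0] * 10, flags))  # flag off: existing behavior
flags.enable("new_bulk_discount")
print(checkout_total([10.0] * 10, flags))  # flag on: new behavior
```

Turning the feature on becomes a runtime decision rather than a merge: `enable` changes behavior without a branch, and `disable` is the rollback.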

The real value of looking at someone’s code isn’t catching bugs. Automated tests, linters, and static analysis catch bugs more reliably than a human scanning a diff at 16:45 on a Friday. The real value is shared understanding. Two people looking at the same code develop a shared mental model of how the system works. They transfer context. They build collective ownership.

Pull requests are terrible at this. Reading a diff in a browser tab is the worst possible way to understand someone’s work. You see lines changed. You don’t see the decisions that led to those changes. You don’t hear the reasoning. You don’t experience the tradeoffs. You approve in isolation what was created in isolation. No understanding is transferred. No context is shared.

Pair programming does what code reviews claim to do. Two developers sit together and write the code together. Review happens in real time. Questions get asked when they matter, during creation, not after. Design decisions are discussed while alternatives are still cheap. One person writes the test, another writes the implementation. Understanding flows in both directions continuously.
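One round of the test-first handoff described above might look like this with Python’s `unittest`. The shipping rule, the `shipping_fee` function, the 50.00 threshold and the 4.95 fee are hypothetical; what matters is the order of work. One developer pins down the behavior in tests, including the edge case, and the other writes just enough code to make them pass.

```python
import unittest


# Step 1 (first developer): failing tests that pin down a hypothetical business rule.
class TestShippingFee(unittest.TestCase):
    def test_free_shipping_at_threshold(self):
        self.assertEqual(shipping_fee(50.0), 0.0)

    def test_flat_fee_below_threshold(self):
        self.assertEqual(shipping_fee(49.99), 4.95)

    def test_rejects_negative_totals(self):
        # The edge case that is easy to forget when nobody asks about it.
        with self.assertRaises(ValueError):
            shipping_fee(-1.0)


# Step 2 (second developer): just enough code to turn the tests green.
def shipping_fee(order_total: float) -> float:
    if order_total < 0:
        raise ValueError("order total cannot be negative")
    # Hypothetical rule: free shipping from 50.00, otherwise a flat 4.95.
    return 0.0 if order_total >= 50.0 else 4.95
```

Run it with any `unittest`-compatible runner, such as `python -m unittest` or pytest. In a real ping-pong session the roles then swap, and the second developer writes the next failing test.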

Mob programming extends this further. The whole team works on the same code at the same time. Review is embedded in creation. There is no separate review step because the review never stopped.

These approaches feel slower. They’re not. Pair programming produces fewer defects, which means less rework. Shared understanding reduces knowledge silos, which means fewer “only Tomasz knows how this works” situations. When the developer who wrote the circuit breaker leaves, four people understand the circuit breaker because they wrote it together. Not because someone left a thumbs-up on a diff.

PRs aren’t useless. They’re misapplied.

Use pull requests when an external contributor submits code to a project they don’t maintain. That’s the original use case, and it still works.

Use them when a new team member is learning the codebase and wants explicit feedback. Make it temporary. Graduate them to trunk-based development as they build confidence.

Use them for regulated environments where audit trails require documented approvals. But be honest about what that audit trail contains. It contains signatures, not understanding. If your compliance framework requires evidence of code review, consider whether pairing session logs satisfy that requirement better than rubber-stamped PRs.

Don’t use them as a substitute for trust. If you trust your team enough to give them production access, trust them enough to push to main.

Stop reviewing code after it’s written. Start discussing code while it’s being written. Pair. Mob. Talk about what you’re building and why. Make understanding the default and isolation the exception. And use AI to question the code itself: ask it to find edge cases you missed, challenge your assumptions, explain what a function actually does versus what you think it does. AI is a relentless reviewer that never gets tired, never rubber-stamps, and never clicks approve out of social obligation. Point it at your code and say “what could go wrong here?” You’ll get more useful feedback in thirty seconds than most PRs deliver in a day.

Continuous integration requires continuous conversation. A pull request is a delayed, asynchronous, isolated monologue. Replace it with the real thing: developers working together, building shared understanding one commit at a time.

Your team was never a collection of strangers. Stop treating them like one.
